Alternatives to Cisco for 10 Gb/s Servers

2012-02-27 13:45

networking

This is a post written in response to Chris Marget’s well-done series “Nexus 5K Layout for 10Gb/s Servers”. While I appreciate the detail and thought that went into the Cisco-based design, he’s clearly a “Cisco guy” showing a teensy-weensy bit of bias toward the dominant vendor in the networking industry. The end result of his design is a price of over $50K per rack just for networking, approaching the aggregate cost of the actual servers inside the rack! Cisco is the Neiman Marcus of the networking world.

So I came up with an alternate design based on anything-but-Cisco switches. The 10G switches from Arista here use Broadcom’s Trident+ chip, which supports much the same hardware features as the Cisco solution (MLAG/vPC, DCB, hardware support for TRILL if needed in the future, etc.). Many other vendors, such as Dell/Force10 and IBM, offer 10G switches based on this merchant silicon. Because this is commodity switching silicon that BRCM will sell to just about anyone (like an x86 processor), pricing would be similar for Trident+ solutions from other vendors [1].

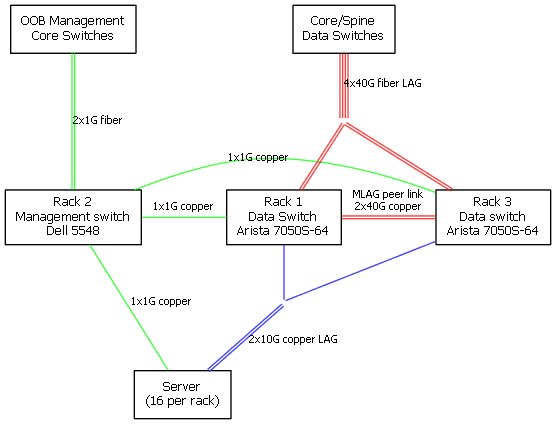

Like Chris, I also include a non-redundant 1G layer 2 switch for out-of-band management of servers and switches, in this case a Dell 5548. I have followed his design by organizing into 3-rack “pods”, each containing sixteen 2U servers that each have dual-port 10 Gbps network with SFP+ ports. All needed optics are included. Server-to-switch copper cabling is not included in the pricing, nor are the fiber runs. Switch-to-switch Twinax cables are included. Here’s a quick diagram, note that the “core” switches are not included in the costs, but the optics used on those core switches are:

Made with Graphviz in 5 minutes because drop shadows don’t add information to a diagram!

| Vendor | SKU | Description | Qty | List (US$) | Extended (US$) |
|---|---|---|---|---|---|
| Arista | DCS-7050S-64-R | Arista 7050, 48xSFP+ & 4xQSFP+ switch, rear-to-front airflow and dual 460W AC power supplies | 2 | 29995 | 59990 |
| Arista | QSFP-SR4 | 40GBASE-SR4 QSFP+ Optic, up to 100m over OM3 MMF or 150m over OM4 MMF | 8 | 1995 | 15960 |
| Arista | CAB-Q-Q-2M | 40GBASE-CR4 QSFP+ to QSFP+ Twinax Copper Cable 2 Meter | 2 | 190 | 380 |
| Dell | 225-0849 | PCT5548, 48 GbE Ports, Managed Switch, 10GbE and Stacking built-in | 1 | 1295 | 1295 |
| Dell | 320-2880 | SFP Transceiver 1000BASE-SX for PowerConnect LC Connector | 4 | 169 | 676 |
| Subtotal (3-rack pod) | | | | | 78301 |
| Per rack | | | | | 26100 |
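For anyone who wants to plug in their own discounted pricing, the arithmetic behind the table can be sketched in a few lines of Python. The quantities and list prices are copied from the table; the function and variable names are made up for this illustration.

```python
# Bill of materials for one 3-rack pod: (vendor, SKU, quantity, list price in US$).
# Figures are copied from the table; names below are illustrative only.
BOM = [
    ("Arista", "DCS-7050S-64-R", 2, 29995),
    ("Arista", "QSFP-SR4", 8, 1995),
    ("Arista", "CAB-Q-Q-2M", 2, 190),
    ("Dell", "225-0849", 1, 1295),
    ("Dell", "320-2880", 4, 169),
]
RACKS_PER_POD = 3


def pod_total(bom):
    """Sum of quantity * list price over every line item."""
    return sum(qty * price for _vendor, _sku, qty, price in bom)


def per_rack(bom, racks=RACKS_PER_POD):
    """Pod total spread evenly across the racks in the pod."""
    return pod_total(bom) / racks


if __name__ == "__main__":
    print("pod subtotal:", pod_total(BOM))        # 78301
    print("per rack:", round(per_rack(BOM)))      # 26100
```

Swap in street prices for the list prices and the per-rack figure updates accordingly.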

As you can see, the costs are roughly half those of the Cisco Nexus 5000-based solution, at just over US$26K per rack (list pricing) versus just over $50K per rack in Chris’s favored design. The total oversubscription ratio is the same as in Chris’s design, although we have 4x40G links going to the core here instead of 16x10G. In any case, each 40G link can be broken out into 4x10G links with a splitter cable if your core is not capable of 40G, or if you want to use a “wider” Clos-style architecture with more than two core/spine switches. You’ll need to do layer 3 access or TRILL to take advantage of that design, though.
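The oversubscription claim is easy to check with back-of-the-envelope arithmetic. The sketch below uses only numbers from this post (3 racks, sixteen servers per rack, two 10G ports per server, 4x40G or 16x10G uplinks) and assumes every server port could be driven at line rate at once; the names are illustrative.

```python
# Pod dimensions taken from the post; names are illustrative only.
RACKS_PER_POD = 3
SERVERS_PER_RACK = 16
PORTS_PER_SERVER = 2
SERVER_PORT_GBPS = 10


def oversubscription(downlink_gbps, uplink_gbps):
    """Ratio of server-facing bandwidth to core-facing bandwidth."""
    return downlink_gbps / uplink_gbps


# 48 servers * 2 ports * 10 Gb/s = 960 Gb/s toward the servers.
downlink = RACKS_PER_POD * SERVERS_PER_RACK * PORTS_PER_SERVER * SERVER_PORT_GBPS

uplink_4x40g = 4 * 40    # this design
uplink_16x10g = 16 * 10  # Chris's design

if __name__ == "__main__":
    print(oversubscription(downlink, uplink_4x40g))   # 6.0
    print(oversubscription(downlink, uplink_16x10g))  # 6.0
```

Both uplink layouts add up to 160 Gb/s, so the ratio works out to 6:1 either way under these assumptions.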

Note also that the control and management planes of the two Arista 7050S switches remain independent for resiliency: this is not a “stacked” configuration. There is also 80 Gbps available between switches on the MLAG peer link in the event of uplink or downlink failures that cause traffic to transit the peer link (which it would not do in normal operation). Assuming, as Chris does, that your core switches support MLAG/vPC, the uplinks are all active and form a 4x40G port channel to the network core.

Finally, if you want to do 10GBASE-T instead of SFP+/twinax to the servers, you can get away with spending about $20K per rack! Arista’s 7050T-64 switch is basically the same as the 7050S-64, but has 48 10GBASE-T ports instead of the 48 SFP+ ports, and it lists for just $20995. If you assume like everyone else that servers will soon have 10GBASE-T “for free” on the motherboard, that is probably the way to go.
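As a rough check on the 10GBASE-T number, here is a sketch that swaps the switch list price in the bill of materials and leaves every other line item alone. That is an assumption on my part: it ignores any difference in server NIC or cabling cost, and it keeps the core-end optics in the total. The names are illustrative.

```python
# Same pod bill of materials as the SFP+ design: SKU -> (quantity, list price US$).
# Only the switch price changes; every other line item is assumed unchanged.
SFP_BOM = {
    "DCS-7050S-64-R": (2, 29995),
    "QSFP-SR4": (8, 1995),
    "CAB-Q-Q-2M": (2, 190),
    "225-0849": (1, 1295),
    "320-2880": (4, 169),
}
RACKS_PER_POD = 3
PRICE_7050T_64 = 20995  # list price quoted in the post


def pod_total(bom):
    """Sum of quantity * list price over every line item."""
    return sum(qty * price for qty, price in bom.values())


def with_10gbase_t(bom):
    """Return a copy of the BOM with the SFP+ switch replaced by the 7050T-64."""
    swapped = dict(bom)
    qty, _old_price = swapped.pop("DCS-7050S-64-R")
    swapped["7050T-64"] = (qty, PRICE_7050T_64)
    return swapped


if __name__ == "__main__":
    t_bom = with_10gbase_t(SFP_BOM)
    print("pod subtotal:", pod_total(t_bom))                  # 60301
    print("per rack:", round(pod_total(t_bom) / RACKS_PER_POD))  # 20100
```

With the core-end optics counted, the total lands right around $20K per rack; leave those optics out and it drops well below.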

Full disclosure: I am not affiliated with any networking equipment vendor in any way, except as a small customer. I might indirectly own stock in one or more of the companies mentioned here via mutual funds, but if I do, I am unaware of it. I pay mutual fund managers to make those decisions for me, thereby stimulating the luxury-sedan/yacht/country-club sector of the economy.

1. Except Cisco, of course. They want $48K (list) for their Nexus 3064, which is based on the older “non-plus” version of the Broadcom Trident and will therefore never support TRILL or DCB in hardware.

Comments:

Anonymous -

I’m a Cisco guy? Ugh.

My posts tend to be about what I’m working on, and yeah… My customers tend to be blue.

It’s a small nit, but it was important in my layout: I included optics for the “core” end of the links in all of my pricing exercises.

It’s not important here, except to make a fair price comparison, but it mattered in my examples because different designs called for wildly differently priced optics.

Throw in another $8300 for core-end QSFP and SX modules and we’ll be on the same page :-)

Also interesting: Cisco and Arista don’t seem to agree about which end of the switch is the “front”.

Cisco: Front is the end with the power supply

Arista: Front is the end with the ports.

Awesome, thanks guys.

It may be the bias of which I’ve been recently accused, but I’m more sympathetic to the Cisco view on this point. These are top-of-rack switches. Nobody is going to claim that the wiring side of a server rack is “the front”.

Nice counter example!

/chris

RPM -

@chris I updated to include core optics for both the data and OOB management networks, to make the prices comparable to yours (although I think you assume a copper run for the management net in your design, but that’s not material in the total pricing).

Anonymous -

Ahh. “Teensy-weensy bit of bias.” That feels much better :-) /chris